Want to share this easily?

Check out the Notion page.

By Morgan Lucas (she/her), based on this video by Johnny Chivers

We use data migration services, such as AWS Database Migration Service (DMS), to, well, migrate data. But why would we want to do this?

Perhaps...

- We're moving our business to the cloud, and need to shift all of that cold storage we have onsite.

- We want to use it as a backup in case our infrastructure is out of commission.

- We could have information to share with a third party, and instead of giving them access to our onsite databases, we put it on AWS to share.

Here's what I did in this walkthrough:

- Created a publicly accessible, password-protected database with Amazon Aurora (PostgreSQL-compatible) to migrate to Amazon DynamoDB

- Managed the inbound rules of its security group to limit access

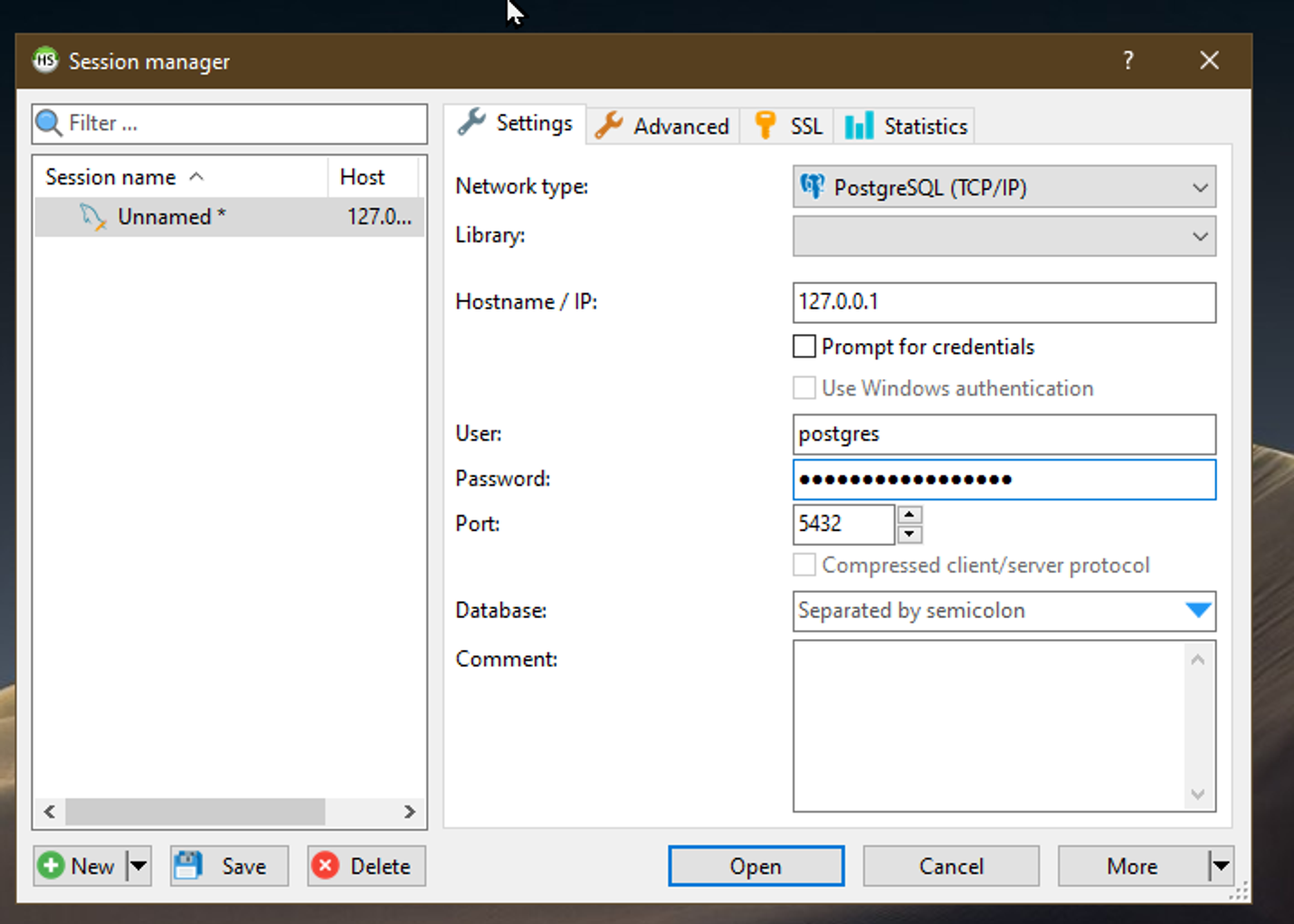
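As a rough sketch of what "limiting access" with an inbound rule can look like in code, here is one way to build a rule that only lets a single IP address reach the database port. This is my own illustration, not something from the video: the helper names (`build_inbound_rule`, `apply_rule`), the example address, and the use of port 5432 (the PostgreSQL default) are all assumptions. The `apply_rule` part needs `boto3` and AWS credentials, so it is kept separate from the rule builder.

```python
import ipaddress

POSTGRES_PORT = 5432  # assumption: the database listens on the PostgreSQL default port


def build_inbound_rule(allowed_ip: str, port: int = POSTGRES_PORT) -> dict:
    """Build one inbound rule that admits a single IPv4 address on one TCP port."""
    # Validate the address and express it as a /32 CIDR block (exactly one host).
    cidr = f"{ipaddress.IPv4Address(allowed_ip)}/32"
    return {
        "IpProtocol": "tcp",
        "FromPort": port,
        "ToPort": port,
        "IpRanges": [{"CidrIp": cidr, "Description": "my workstation only"}],
    }


def apply_rule(group_id: str, rule: dict) -> None:
    """Send the rule to AWS. Needs boto3 installed and credentials configured."""
    import boto3  # imported here so the builder above works without boto3

    ec2 = boto3.client("ec2")
    ec2.authorize_security_group_ingress(GroupId=group_id, IpPermissions=[rule])


if __name__ == "__main__":
    # 203.0.113.10 is a documentation-only address; swap in your own public IP.
    print(build_inbound_rule("203.0.113.10"))
```

The idea is simply that a narrow rule (one address, one port) is safer for a publicly accessible database than leaving the port open to everyone.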

- Used the open source software HeidiSQL to interact with the database via a TCP/IP session and the specific endpoint URL for the DB (not shown here for security)

- Connected to Aurora PostgreSQL Database

- Ran queries that deleted and created tables populated with new information

- Created a publicly accessible replication instance to connect to our RDS database and initiate the database migration

- Created the DMS endpoints, using the existing replication instance, to migrate the User_Data database to Amazon DynamoDB

- Created an IAM role to talk to DMS (making sure to select the radio button beneath)

- Successfully connected the target endpoint to the replication instance (the middleman that transfers the data)

- Set up a database migration task to move data from the Aurora PostgreSQL database to Amazon DynamoDB
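I did all of this in the AWS console, but the last step can also be sketched in code. The snippet below is a minimal, hedged example of how a migration task request might be put together with `boto3`. The helper names, the task identifier, the `public` schema (the PostgreSQL default), and the choice of a one-time `full-load` migration are my assumptions for illustration; the three ARNs are placeholders you would copy from your own endpoints and replication instance.

```python
import json


def build_table_mappings(schema: str = "public", table: str = "%") -> str:
    """Return DMS table mappings (a JSON string) with a single selection rule."""
    rules = {
        "rules": [
            {
                "rule-type": "selection",
                "rule-id": "1",
                "rule-name": "include-tables",
                # "%" is the DMS wildcard: include every table in the schema.
                "object-locator": {"schema-name": schema, "table-name": table},
                "rule-action": "include",
            }
        ]
    }
    return json.dumps(rules)


def build_task_request(source_arn: str, target_arn: str, instance_arn: str,
                       task_id: str = "aurora-to-dynamodb") -> dict:
    """Collect the arguments for a one-time (full-load) migration task."""
    return {
        "ReplicationTaskIdentifier": task_id,
        "SourceEndpointArn": source_arn,        # the Aurora PostgreSQL endpoint
        "TargetEndpointArn": target_arn,        # the DynamoDB endpoint
        "ReplicationInstanceArn": instance_arn,  # the "middleman" instance
        "MigrationType": "full-load",
        "TableMappings": build_table_mappings(),
    }


def create_task(request: dict) -> None:
    """Create the task in AWS. Needs boto3 installed and credentials configured."""
    import boto3  # imported here so the builders above work without boto3

    dms = boto3.client("dms")
    dms.create_replication_task(**request)
```

Keeping the request builder separate from the AWS call means you can print and double-check the request before anything is actually created.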

Find me on Twitter, or my blog. You can Buy Me A Coffee and help me keep writing!
